MAVS

MAVS evaluates the performance of autonomous perception and navigation software in real time. The software is built on an MPI-based framework for coupling parallel processes and includes a physics-based sensor simulator for LIDAR, GPS, cameras, and other sensors.

While MAVS is a fully functional standalone simulator, additional wrappers allow it to be integrated with robotic development tools such as the Robot Operating System (ROS).

MAVS is available under the MIT license. For more information, contact mavs-support@cavs.msstate.edu.

Why use simulation for autonomous ground vehicle testing?

The image above displays the many interactions involved in developing a simulation. Controlling these factors contributes greatly to experimental safety, cost-effectiveness, and repeatability, and makes it possible to run thousands of experiments automatically.

What does MAVS bring to simulation software?

- Optimized for off-road activity: MAVS uses a validated tire-soil interaction model for sand and clay and a validated lidar-vegetation interaction model

- Optimized for large-scale HPC simulation: MAVS automatically generates new off-road terrain and runs natively on all flavors of Unix/Linux as well as Windows

- Optimized for ease of use: MAVS interfaces with ROS and has a zero-install Python scripting interface

While development is ongoing, MAVS is currently used for applications including sensor placement, synthetic training data for neural networks, and education. It can also be used for system-level testing of autonomy software.

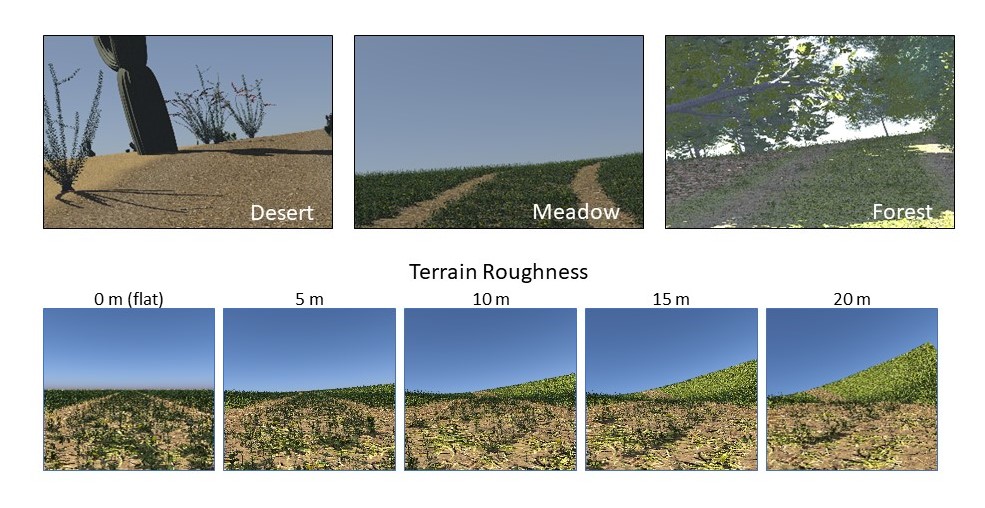

Screen captures from MAVS simulations that show the same environment with differing ecosystems (top) and with a change in terrain roughness (bottom).

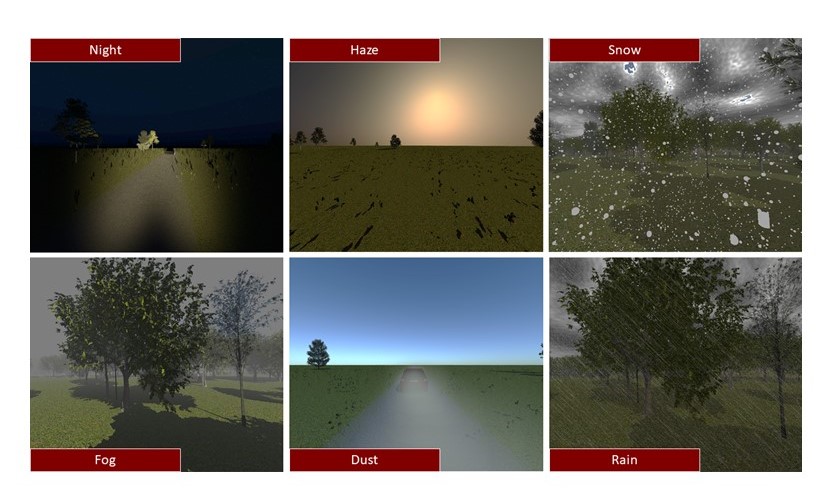

Screen captures from MAVS showing a variety of environment properties (left to right): night, haze, snow, fog, dust, and rain.

MAVS environments can represent a variety of custom ecosystems and natural conditions, including snow, fog, dust, and rain. Terrain properties and roughness can be controlled, as well as daylight brightness, haze, and moonlight.
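To illustrate how controllable environment properties support repeatable, automated experiments, here is a minimal Python sketch that enumerates a reproducible set of scenarios. It does not call the MAVS API: `EnvironmentConfig`, `build_scenarios`, the weather and time-of-day labels, and the unitless roughness range are all hypothetical names and values chosen for illustration.

```python
import itertools
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvironmentConfig:
    """Hypothetical description of one scenario; not a MAVS type."""
    weather: str
    time_of_day: str
    terrain_roughness: float  # unitless placeholder in [0, 1]
    seed: int


# Illustrative labels taken from the conditions listed in the text.
WEATHER = ["clear", "snow", "fog", "dust", "rain"]
TIME_OF_DAY = ["day", "night"]


def build_scenarios(n_roughness_samples, base_seed=0):
    """Return every weather/time/roughness combination, reproducibly."""
    # A dedicated, seeded generator makes the sampled roughness values
    # identical on every run with the same base_seed.
    rng = random.Random(base_seed)
    roughness_values = [
        round(rng.uniform(0.0, 1.0), 3) for _ in range(n_roughness_samples)
    ]

    scenarios = []
    combos = itertools.product(WEATHER, TIME_OF_DAY, roughness_values)
    for index, (weather, time_of_day, roughness) in enumerate(combos):
        # Each scenario gets its own seed so it can be re-run in isolation.
        scenarios.append(
            EnvironmentConfig(weather, time_of_day, roughness, base_seed + index)
        )
    return scenarios


if __name__ == "__main__":
    for config in build_scenarios(n_roughness_samples=3, base_seed=42):
        print(config)
```

Each resulting configuration could then be handed to whatever routine actually launches a simulation; because the list depends only on `base_seed`, the same batch of experiments can be regenerated exactly.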